U.S. Scraping Limits, API Access Controls, and National-Security Actions Are Reshaping AI Training Data Sourcing (and Fair Use)

Federal enforcement, evolving case law, and national-security orders are tightening the legal rules around AI training data. This guide covers scraping limits, API terms enforcement, copyright risks, and national-security screening for companies building or fine-tuning AI models.

AI training data sourcing is shifting from a pure feasibility question (“can we get it?”) to an authorization and defensibility question (“should we — and are we allowed to?”). Copyright and fair use still matter, but they now intersect with tighter API programs, stricter terms of service, and technical access controls that can turn a crawl into a contract or access dispute. In parallel, national-security and foreign-adversary concerns increasingly show up in diligence conversations about datasets, vendors, and where data is stored and accessed. This guide is a practical checklist for founders, product teams, and counsel: a decision framework for sourcing choices, a playbook for permission-first alternatives, and a documentation checklist you can hand to investors, partners, or regulators.

For policy background on the U.S. government’s direction of travel, see Breaking Down the Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence. For the data/national-security intersection, see PADFA: Implications and Impacts on Data Regulation and High-Tech Startups.

TL;DR for builders

- Expect access gates to shape what’s “defensible.” APIs, TOS, auth walls, rate limits, and bot detection can define your real risk posture before any court reaches fair use.

- Make provenance and permissions product requirements. Treat source logs, terms snapshots, and permission basis as first-class engineering artifacts — not cleanup work after a demand letter.

- Build a national-security screen early. Bake cross-border access, sensitive-data flags, and restricted-counterparty checks into data/vendor (and sometimes investor) decisions.

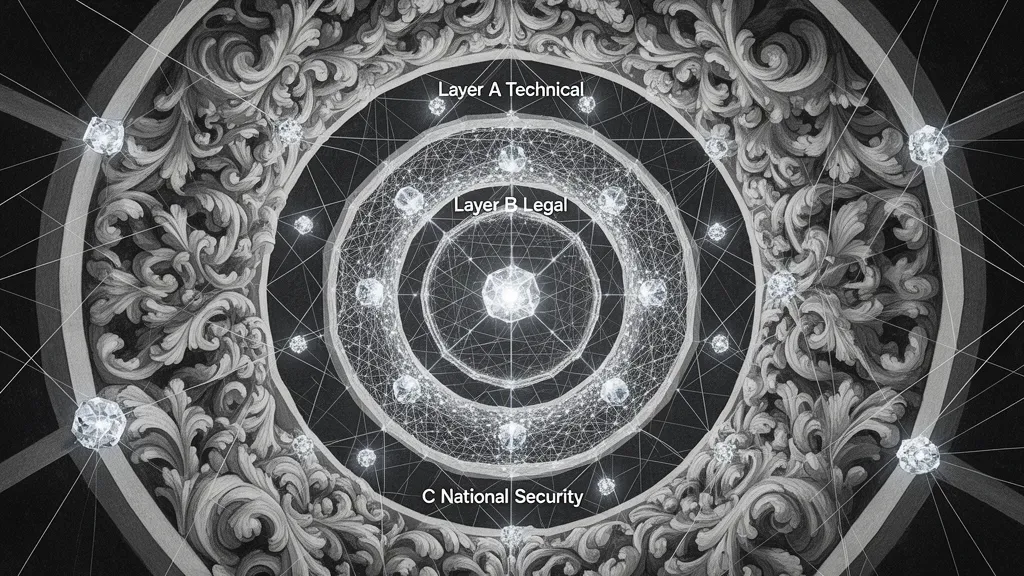

What’s changed in the U.S.: three constraint layers now shape training datasets

In the U.S., training-data sourcing now runs through three overlapping constraint layers. Teams that treat the “public web” as a single bucket tend to miss how quickly a defensible pipeline can turn into a revocation, dispute, or diligence red flag.

Layer A — Technical/access constraints. Expect more friction: robots.txt directives, paywalls, authentication walls, rate limits, bot detection, and emerging content controls (including watermarking and other anti-extraction signals). The practical impact is that “publicly accessible” is shrinking, and your collection method leaves artifacts (logs, IP ranges, account keys) that make the story of how you obtained data easier to reconstruct later.

Layer B — Legal constraints (copyright + contract + computer access). Copyright and fair use remain central, but they are no longer the only gating issue. API terms and website TOS can create contractual liability even where a fair use argument might otherwise be plausible. Separately, “unauthorized access” theories (often fact-dependent) can become part of the threat model when a team bypasses technical measures or ignores explicit restrictions.

Layer C — National-security constraints. Datasets and training pipelines can be treated as strategic assets subject to heightened scrutiny: CFIUS diligence, executive-branch actions, restricted counterparties, and “sensitive data” categories. This is one reason to operationalize a cross-border and counterparty screen early (see PADFA implications for startups for context).

Mini-scenario: A startup assumes “public web = fair game,” builds a crawler, and relies on an official API key for throughput. The platform detects downstream ML use, revokes the key, and sends a demand letter pointing to the API terms. What they should have done at design time: choose a permission basis per source (license/terms/statutory/exclude), implement provenance logging and terms snapshots, and set a hard rule against circumvention without legal sign-off.

How scraping limits and API controls change the real-world fair use posture (even before the court reaches fair use)

Fair use analysis often starts too late. A better framing for builders is: not only “is training fair use?” but “how did we obtain the data, and what did we agree to?” If a source is accessed via an API key, a login, or click-through terms, your biggest exposure may be contract and access-control claims, long before a judge ever reaches the four factors. And when a court does reach them, access controls color each one:

- Purpose/character: access controls can make your use look more like commercial productization than open research, especially if your product substitutes for the source’s core offering.

- Nature of the work: controls tend to tighten around news, books, images, and code, categories where rights-holders argue the works are highly creative and the licensing market is sensitive to unlicensed use.

- Amount/substantiality: whole-work ingestion is common in ML; mitigate with documented selection/filters and an internal rationale for why the scope was necessary.

- Market effect: paywalls and ML-specific API prohibitions strengthen “lost licensing market” narratives and can make substitution arguments feel more concrete.

Operational reality: “contract eats fair use.” API terms, clickwrap/browsewrap, and rate-limit or bot-detection circumvention can dominate the risk analysis because the dispute becomes “you promised not to” rather than “copyright permits it.” The practical control is to map each source to a permission basis: licensed, permitted by terms, statutory/public domain, or excluded (no clear permission).
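The source-to-permission mapping described above can be expressed as a small data structure. This is a minimal sketch, not a prescribed implementation: the class names, the `ingestible` helper, and the sample source names are all hypothetical, and the four enum values simply mirror the four permission bases listed in the text.

```python
from dataclasses import dataclass
from enum import Enum


class PermissionBasis(Enum):
    """The four permission bases named in the text."""
    LICENSED = "licensed"
    PERMITTED_BY_TERMS = "permitted_by_terms"
    STATUTORY_OR_PUBLIC_DOMAIN = "statutory_or_public_domain"
    EXCLUDED = "excluded"  # no clear permission


@dataclass(frozen=True)
class Source:
    name: str                 # hypothetical source label
    basis: PermissionBasis    # assigned by a human reviewer, not inferred


def ingestible(sources):
    """Return only the sources whose basis is something other than EXCLUDED."""
    return [s for s in sources if s.basis is not PermissionBasis.EXCLUDED]


# Hypothetical inventory, for illustration only.
sources = [
    Source("publisher-feed", PermissionBasis.LICENSED),
    Source("forum-crawl", PermissionBasis.EXCLUDED),
    Source("agency-reports", PermissionBasis.STATUTORY_OR_PUBLIC_DOMAIN),
]

print([s.name for s in ingestible(sources)])
```

Requiring a basis on every `Source` means a source with no recorded decision cannot enter the pipeline by default, which is the point of mapping each source up front.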

Mini-scenario: Your team uses an official API that expressly restricts downstream ML training, but you train anyway because “it’s just embeddings.” When the key is revoked, you lose data continuity and trigger legal escalation. A better design is to (1) isolate “API-only” corpora and enforce use restrictions at the dataset level, (2) negotiate for training rights (or switch to licensed/open sources), and (3) keep an audit pack with terms snapshots and approvals. For deeper background on fair use considerations in model training, see Generative AI Training — Copyright and Fair Use.
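Point (1) in the scenario above, enforcing use restrictions at the dataset level, might look something like the following. It is a sketch under stated assumptions: the `Corpus` fields, the use labels ("training", "display", and so on), and the corpus names are hypothetical, and a real system would derive `allowed_uses` from the reviewed terms rather than hard-coding it.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Corpus:
    name: str
    origin: str  # e.g. "api", "licensed", "first_party" (hypothetical labels)
    allowed_uses: frozenset = field(default_factory=frozenset)


class UseNotPermitted(Exception):
    """Raised when a corpus is requested for a use its terms do not cover."""


def select_for(use, corpora):
    """Fail closed: refuse the whole selection if any corpus disallows `use`."""
    blocked = [c.name for c in corpora if use not in c.allowed_uses]
    if blocked:
        raise UseNotPermitted(f"{use!r} not permitted for: {', '.join(blocked)}")
    return [c.name for c in corpora]


# Hypothetical corpora: the API-sourced one does not include "training".
api_corpus = Corpus("social-api-posts", "api", frozenset({"display", "search"}))
licensed = Corpus("stock-images", "licensed", frozenset({"training", "evaluation"}))

print(select_for("training", [licensed]))
try:
    select_for("training", [licensed, api_corpus])
except UseNotPermitted as exc:
    print("blocked:", exc)
```

Failing the entire selection, rather than silently dropping the restricted corpus, forces someone to notice and decide, which also leaves a record for the audit pack.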

National-security measures (CFIUS and executive actions) are now part of dataset and model diligence

U.S. “national security” is no longer only a defense-contractor issue. For AI companies, it increasingly shows up as a diligence layer around what data you train on, who touches it, and where it flows — especially when sensitive categories or cross-border operations are involved.

When national security shows up in training-data decisions:

- Sensitive personal data (e.g., geolocation, biometrics, health, financial identifiers) and large-scale datasets that could enable surveillance, profiling, or coercion.

- Critical infrastructure / defense-adjacent domains and dual-use capabilities (where model improvements can be framed as strategic).

- Cross-border transfers and access: where data is stored, which affiliates/contractors can access it, and whether investors/partners create a foreign-control narrative.

What CFIUS can change (practically): transactions can be delayed, restructured, or unwound; mitigation can require data localization, restricted access, governance controls, and audits. This matters even if you are “just training a model,” because datasets and training pipelines can be treated as strategic assets rather than ordinary IT inputs.

Executive actions and agency signals: procurement restrictions, reporting expectations, safety/security evaluation norms, and restricted-entity lists shape what customers and later-stage investors will expect. One operational move: build a national-security screen into vendor onboarding (labeling firms, cloud/storage, data brokers) and partnership review (who gets model access, where inference happens, and who administers keys). For a data-regulation angle that overlaps with these concerns, see PADFA implications.

Mini-scenario: A startup takes funding tied to offshore labeling and stores training data in a region chosen for cost. Later, enterprise customers and investors ask for data residency, access controls, and subcontractor lists — slowing deals. The “do instead” playbook is to (1) pre-approve geographies and subprocessors, (2) segment sensitive datasets, (3) document access and key management, and (4) negotiate vendor terms that support localization and auditability.
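The pre-approval and segmentation steps in this playbook can be checked mechanically before a dataset plan goes ahead. The sketch below is illustrative only: the region names, the subprocessor names, and the `DatasetPlan` fields are hypothetical, and the sensitive-category set simply reuses the examples given earlier in this section. A flag here means "route to review," not a legal conclusion.

```python
from dataclasses import dataclass

# Hypothetical pre-approved lists; a real program would maintain these with counsel.
APPROVED_REGIONS = {"us-east", "us-west"}
APPROVED_SUBPROCESSORS = {"LabelCo US"}
# Examples of sensitive categories taken from the list earlier in this section.
SENSITIVE_CATEGORIES = {"geolocation", "biometrics", "health", "financial_identifiers"}


@dataclass
class DatasetPlan:
    name: str
    storage_region: str
    subprocessors: set
    categories: set


def screen(plan):
    """Return a list of human-readable flags; an empty list means nothing to escalate."""
    flags = []
    if plan.storage_region not in APPROVED_REGIONS:
        flags.append(f"storage region not pre-approved: {plan.storage_region}")
    for name in sorted(plan.subprocessors - APPROVED_SUBPROCESSORS):
        flags.append(f"subprocessor not pre-approved: {name}")
    sensitive = sorted(plan.categories & SENSITIVE_CATEGORIES)
    if sensitive:
        flags.append("sensitive categories present, segment and review: " + ", ".join(sensitive))
    return flags


# Hypothetical plan resembling the scenario above.
plan = DatasetPlan("clinic-notes", "ap-south", {"LabelCo US", "CheapLabel Ltd"}, {"health", "text"})
for flag in screen(plan):
    print(flag)
```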

A defensible dataset sourcing playbook (what to do instead of hoping fair use saves you)

A defensible sourcing strategy is built on repeatable choices and records. The goal is to ensure you can explain, source-by-source, why you were permitted to use the data and how you constrained risk — without relying on a last-minute fair use argument.

Step 1 — Classify intended use and outputs. Document whether you’re building a foundation model, a domain model, or RAG-only; and whether the activity is training, fine-tuning, or evaluation. These distinctions drive different exposure profiles (e.g., memorization risk, redistribution concerns) and different documentation needs.

Step 2 — Choose a sourcing lane (and write down the rationale). Common lanes include: (a) licensed sources (publishers, stock providers, brokers), (b) openly licensed content (e.g., Creative Commons/open data) with attribution and terms compliance, (c) first-party/user-provided data with clear permissions, (d) public domain/government data (confirm status and mixed-rights issues), and (e) synthetic/simulated data (useful, but not a cure-all).

Step 3 — Build provenance as a system. Implement source logs, rights metadata, versioning, “do-not-ingest” lists, and deletion/quarantine capability. “Audit readiness” means you can produce a dataset manifest showing permission basis per source and the terms/version in effect at collection time.
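One way to make "the terms/version in effect at collection time" provable is to hash the terms text when you collect and store the hash in the manifest. The following is a minimal sketch using only the Python standard library; the field names, the `manifest_entry` helper, and the sample values are hypothetical, and you would also want to archive the full terms text alongside the hash.

```python
import hashlib
import json
from datetime import datetime, timezone


def snapshot_terms(terms_text):
    """Return a SHA-256 fingerprint of the terms exactly as they read at collection time."""
    return hashlib.sha256(terms_text.encode("utf-8")).hexdigest()


def manifest_entry(source, permission_basis, terms_text, dataset_version, collected_at=None):
    """Build one manifest row; field names are illustrative, not a standard schema."""
    collected_at = collected_at or datetime.now(timezone.utc)
    return {
        "source": source,
        "permission_basis": permission_basis,
        "terms_sha256": snapshot_terms(terms_text),
        "dataset_version": dataset_version,
        "collected_at": collected_at.isoformat(),
    }


# Hypothetical source and terms text, for illustration only.
entry = manifest_entry(
    source="publisher-feed",
    permission_basis="licensed",
    terms_text="Licensee may use Content for model training.",
    dataset_version="2024-06-01",
    collected_at=datetime(2024, 6, 1, tzinfo=timezone.utc),
)
print(json.dumps(entry, indent=2))
```

If the terms change later, the new text produces a different hash, so the manifest shows which version governed each collection run.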

Step 4 — Design for minimization and separation. Avoid collecting what you can’t justify, segregate sensitive datasets, and enforce access controls so restrictions are real in practice, not just in policy.

Mini-scenario: “We bought a dataset,” but you can’t show chain of rights (or whether it included restricted/API-sourced content). The fix is vendor diligence plus contract cure tools: reps/warranties on provenance and training rights, audit rights, indemnity structure, and a recall/deletion obligation if a portion is later found to be unauthorized.

Practical risk matrix: common training-data routes and how to reduce exposure

Use this matrix to sanity-check your sourcing approach. The same model can be “legally thoughtful” or “high-risk” depending on the route you used to obtain inputs and the controls you can prove.

- Route 1: Direct web scraping. Risks: TOS breach, access-control circumvention allegations, copyright claims, reputational fallout. Risk reducers: permission-first targets, respect for robots.txt and rate limits, source allowlists, and a legal review gate for 401/403/CAPTCHA or paywall signals.

- Route 2: Official APIs (platform/publisher/social). Risks: explicit ML prohibitions, key revocation, audits, downstream use restrictions. Risk reducers: negotiate terms, isolate “API-only” datasets with technical enforcement, and retain proof of permitted use (terms snapshots + approvals).

- Route 3: Third-party “ready-to-train” datasets. Risks: unclear provenance, indemnity gaps, restricted elements, cross-border issues. Risk reducers: reps/warranties on rights and sourcing, audit rights, DPAs/security addenda, and diligence questions like “what were the original sources?” and “any API-derived content?”

- Route 4: First-party and user-contributed data. Risks: consent scope mismatch, privacy/security obligations, retention/deletion duties, model inversion concerns. Risk reducers: clear notices/consents, opt-outs, governance and red-team testing, and a deletion workflow that reaches training datasets.

- Route 5: Public domain / government data. Risks: mixed-rights compilations, sensitive fields, and usage restrictions despite “public” status. Risk reducers: a verification workflow and exclusion of sensitive elements.

- Route 6: Synthetic data. Risks: leakage from source data, utility limits, false confidence. Risk reducers: document generation method, validate non-memorization, and keep separation from restricted corpora.

How to use this matrix: pick a primary lane you can defend, define a fallback if access is revoked, and write down your red lines (e.g., no paywalls/CAPTCHA solving; no sources with ML-prohibited terms; no unclear provenance).
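For Route 1, the allowlist, robots.txt, and legal-review-gate reducers can be wired into the crawler as explicit checks. This is a sketch under assumptions: the allowlisted host, the user-agent string, and the action labels are hypothetical, and the CAPTCHA check is a naive substring match standing in for whatever detection you actually use. It uses the standard library's `urllib.robotparser` and makes no network calls.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser

ALLOWLIST = {"data.example.org"}  # hypothetical permission-first targets
REVIEW_STATUSES = {401, 403}      # access-control signals named in the matrix


def may_fetch(url, robots_txt, user_agent="example-bot"):
    """Allow a fetch only if the host is allowlisted and robots.txt permits the path."""
    if urlparse(url).hostname not in ALLOWLIST:
        return False
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def after_response(status_code, body):
    """Map a response to an action label; never retry around an access-control signal."""
    if status_code in REVIEW_STATUSES or "captcha" in body.lower():
        return "halt_for_legal_review"
    if status_code == 429:  # HTTP 429 Too Many Requests: slow down, do not rotate IPs
        return "back_off"
    return "ingest" if status_code == 200 else "skip"


robots = "User-agent: *\nDisallow: /private/\n"
print(may_fetch("https://data.example.org/reports/1", robots))
print(may_fetch("https://data.example.org/private/x", robots))
print(after_response(403, ""))
```

Treating 401/403 and CAPTCHA responses as a stop condition, not an obstacle to engineer around, is what turns the "no circumvention" red line into something the pipeline enforces.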

Contracts, controls, and records: the compliance toolkit that makes your story credible

In practice, “defensible training data” is less about having the perfect legal theory and more about having a credible paper trail backed by enforceable controls. If you can’t show what you used, under what permission, and how you enforced restrictions, you will struggle in partner diligence, investor diligence, and disputes.

Contract checklist (vendors, APIs, partners):

- Rights grant scope: explicitly cover training, fine-tuning, evaluation, embeddings, and derivatives (and whether outputs can be commercialized).

- Provenance + permissions reps: the supplier should represent it has rights to grant the license and that sourcing complied with applicable terms/laws.

- Indemnities: define what claims are covered, caps, and who controls defense/settlement.

- Audit + recall/deletion: rights to verify and obligations to cure, replace, or delete/recall tainted data.

- Security + subprocessors: minimum controls, subcontractor limits, and incident notice timelines.

- Cross-border terms: localization, access restrictions, and transfer mechanisms where needed.

Engineering controls that support the legal posture: access logs and key management; dataset quarantines; training run traceability (which dataset versions fed which runs); and a takedown/data-removal workflow tied to incident response.
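Training run traceability can start as a simple index from dataset versions to runs, so that a takedown request translates into a list of affected runs. The sketch below is one possible shape, not a reference design: the `Lineage` class, its method names, and the run and dataset identifiers are all hypothetical.

```python
from collections import defaultdict


class Lineage:
    """Records which (dataset, version) pairs fed which training runs."""

    def __init__(self):
        self._runs = {}                       # run_id -> set of (dataset, version)
        self._by_dataset = defaultdict(set)   # dataset -> set of run_ids

    def record_run(self, run_id, inputs):
        """Register a run together with the dataset versions it consumed."""
        self._runs[run_id] = set(inputs)
        for dataset, _version in inputs:
            self._by_dataset[dataset].add(run_id)

    def runs_touching(self, dataset):
        """Return the runs that used any version of `dataset` (e.g. after a takedown)."""
        return sorted(self._by_dataset.get(dataset, set()))

    def inputs_of(self, run_id):
        """Return the dataset versions recorded for a run."""
        return sorted(self._runs.get(run_id, set()))


# Hypothetical runs and datasets, for illustration only.
lineage = Lineage()
lineage.record_run("run-001", [("publisher-feed", "v1"), ("agency-reports", "v3")])
lineage.record_run("run-002", [("publisher-feed", "v2")])
lineage.record_run("run-003", [("agency-reports", "v3")])

print(lineage.runs_touching("publisher-feed"))
```

With this index in place, the takedown workflow has a concrete first step: look up the affected runs, then decide whether to quarantine, retrain, or document why no action is needed.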

Recordkeeping / audit pack: keep a dataset manifest listing sources and a permission basis per source, terms/version snapshots, vendor contracts, version history, and internal risk approvals.

Mini-scenario: a partner asks, “Prove you have rights to train.” A good audit pack answers in one folder: (1) what datasets were used, (2) the governing permission documents, and (3) the technical controls showing you honored restrictions. For fair use background that often informs the “permission basis” memo, see Navigating AI Copyright & User Intent.

Actionable next steps:

- Inventory your current datasets and tag each source with a clear permission basis (license, terms, statutory/public domain, or excluded).

- Freeze ingestion from sources with unclear terms or provenance until reviewed and documented.

- Add a vendor diligence questionnaire and contract addendum that expressly covers training rights and provenance.

- Implement provenance logging and dataset versioning so you can reproduce (or exclude) specific sources.

- Run a national-security screen for sensitive data categories and cross-border storage/access.

- Schedule a legal review of your scraping/API workflows and training-data agreements.

Contact Promise Legal if you want a training-data sourcing audit, API/TOS workflow review, or vendor contract package designed for AI training rights, provenance, and cross-border risk.